India’s Data Center (Part 4/4): Case Study: India's AI Public Infrastructure Initiative as a Catalyst for Data Centre Expansion

24 Feb 2026

Context and Rationale: A Fundamental Policy Shift

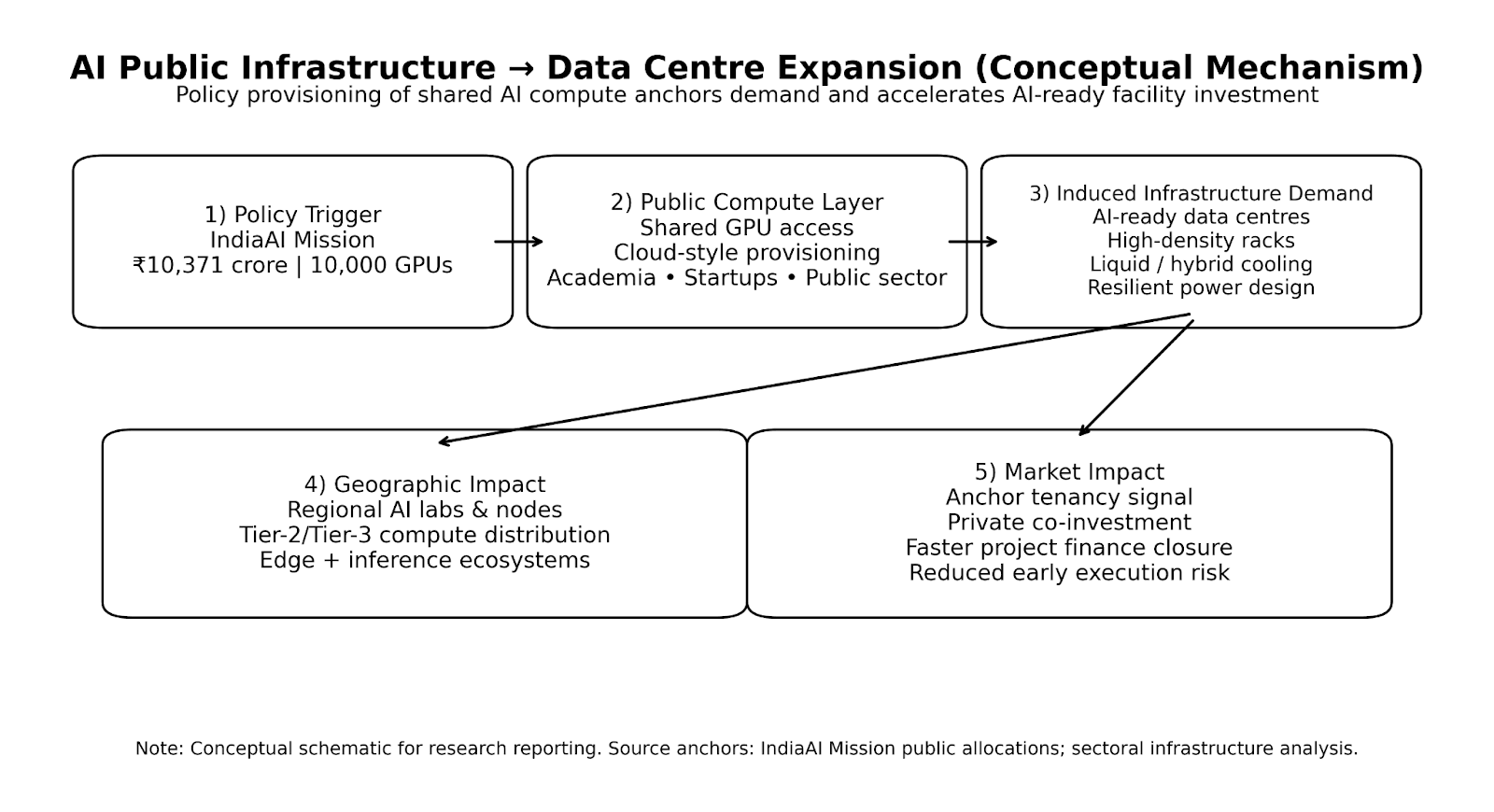

Acknowledging compute as a foundational input for artificial intelligence, the Government of India has launched a public AI infrastructure strategy within the broader IndiaAI Mission. This program represents a departure from previous digital initiatives that emphasised connectivity and applications. Instead, it specifically addresses compute availability, affordability, and geographic distribution as critical constraints on AI adoption.

The initiative marks a significant policy shift by positioning AI compute infrastructure as public digital infrastructure, comparable to roads, power grids, or telecommunications, rather than viewing it solely as a private-sector responsibility. This elevation of compute infrastructure to the status of essential national infrastructure signals the government's recognition that AI capacity constitutes a strategic asset requiring public intervention.

The ₹10,371 Crore Investment in GPU Capacity

A core component of this strategy is the government's allocation of ₹10,371 crore (approximately USD 1.25 billion) for the procurement and provisioning of 10,000 GPUs. These resources are intended to support research institutions, startups, academia, and public-sector applications across education, healthcare, and financial inclusion.
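As a rough sanity check, the figures quoted above can be turned into an implied exchange rate and an average allocation per GPU. This sketch takes the article's numbers at face value; the per-GPU result is an average of the whole allocation (procurement plus provisioning), not a hardware price, and the variable names are illustrative.

```python
# Figures as quoted in the text above (taken at face value).
ALLOCATION_CRORE = 10_371        # rupees, expressed in crore
ALLOCATION_USD_BILLION = 1.25    # approximate USD equivalent quoted in the text
GPU_COUNT = 10_000

CRORE = 10_000_000               # 1 crore = 10^7

allocation_inr = ALLOCATION_CRORE * CRORE
allocation_usd = ALLOCATION_USD_BILLION * 1_000_000_000

# Exchange rate implied by the two quoted totals (not an official rate).
implied_inr_per_usd = allocation_inr / allocation_usd

# Average allocation per GPU, covering procurement and provisioning together.
inr_per_gpu = allocation_inr / GPU_COUNT
usd_per_gpu = allocation_usd / GPU_COUNT

print(f"Implied rate: about {implied_inr_per_usd:.2f} INR per USD")
print(f"Average per GPU: about {inr_per_gpu / CRORE:.4f} crore INR, or {usd_per_gpu:,.0f} USD")
```

On these numbers the allocation averages a little over ₹1 crore per GPU, which gives a sense of why smaller organisations struggle to fund dedicated capacity on their own.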

This intervention directly addresses market failures that have constrained AI adoption:

- High Entry Costs: The substantial capital requirements for GPU infrastructure create barriers for smaller organisations and research institutions that cannot justify or afford dedicated AI compute investments.

- Limited Domestic GPU Availability: India's AI compute resources remain concentrated among a small number of hyperscale operators, limiting access for the broader ecosystem of developers, researchers, and enterprises.

- Resource Concentration: The concentration of AI compute capacity among a few hyperscale operators creates dependency risks and limits the diversity of AI development pathways available to the domestic innovation ecosystem.

By socialising the capital investment in GPU procurement, the government aims to democratise access to AI compute resources while establishing baseline demand that can stimulate private-sector infrastructure investment.

Infrastructure Design and Implementation Model

Shared-Access Platform Architecture

The AI public infrastructure initiative employs a shared-access, platform-based model in which compute resources are provided through cloud-like interfaces rather than dedicated ownership. This approach fundamentally differs from traditional infrastructure procurement where each institution independently acquires and operates its own computing resources.

The shared-access model reduces barriers to entry for smaller firms and research institutions while maximising the utilisation of costly GPU assets. Rather than individual organisations maintaining underutilised GPU clusters, the platform model enables dynamic resource allocation based on actual workload demands, improving overall asset efficiency.
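The utilisation argument can be illustrated with a small sketch. The demand figures below are invented purely for illustration (they are not from the initiative), and the three institution names are hypothetical. The comparison is between each institution buying enough GPUs for its own peak and a shared pool sized for the peak of combined demand.

```python
# Hypothetical GPU demand per time slot for three institutions (illustrative only).
demand = {
    "university_lab": [2, 8, 3, 1],
    "startup":        [6, 1, 2, 7],
    "public_agency":  [1, 2, 8, 2],
}

def dedicated_capacity(demand):
    """Each institution provisions for its own peak demand."""
    return sum(max(series) for series in demand.values())

def pooled_capacity(demand):
    """A shared platform provisions for the peak of combined demand."""
    combined = [sum(slot) for slot in zip(*demand.values())]
    return max(combined)

def utilisation(demand, capacity):
    """Fraction of available GPU-slots that are actually used."""
    used = sum(sum(series) for series in demand.values())
    slots = len(next(iter(demand.values())))
    return used / (capacity * slots)

dedicated = dedicated_capacity(demand)
pooled = pooled_capacity(demand)
print(f"Dedicated: {dedicated} GPUs, utilisation {utilisation(demand, dedicated):.0%}")
print(f"Pooled:    {pooled} GPUs, utilisation {utilisation(demand, pooled):.0%}")
```

Because the institutions' peaks fall in different time slots, the pool needs fewer GPUs to serve the same workloads. The benefit shrinks as demand peaks become more correlated, so the size of the gain in practice depends on real usage patterns.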

Geographic Distribution Strategy

Notably, the initiative extends beyond a single centralised facility. Policy documents indicate an intention to support distributed AI laboratories and compute nodes across multiple regions, consistent with broader goals of decentralisation and regional capacity building.

This distributed architecture approach serves multiple strategic objectives: reducing geographic concentration risk, supporting regional innovation ecosystems, and ensuring compute access extends beyond Tier-1 metropolitan centers where existing infrastructure is concentrated.

Creating Anchor Demand for Data Centre Infrastructure

From the perspective of data centers, this model creates anchor demand for AI-optimised facilities equipped with high-density racks, liquid cooling systems, and resilient power architectures. Public procurement of AI compute reduces risk for early-stage infrastructure investment, establishing baseline utilisation that can stimulate private-sector co-investment in additional capacity.

The anchor demand mechanism is particularly important given the capital intensity and technical complexity of AI-ready data center infrastructure. By providing long-term, predictable demand through government procurement, the initiative de-risks private investment in facilities that might otherwise face uncertain utilisation during market development phases.

Implications for the Data Centre Ecosystem

The AI public infrastructure initiative produces several secondary effects on India's data center market, influencing both investment patterns and technical standards across the sector.

First Implication: Accelerating AI-Ready Infrastructure Standards

The initiative accelerates the adoption of AI-ready infrastructure standards, prompting operators to implement higher rack densities, advanced cooling technologies, and enhanced power efficiency. As government facilities establish operational benchmarks for AI workloads, private operators developing competing or complementary capacity must match or exceed these standards.

This standardisation effect raises the technical bar across the entire sector. Data center operators seeking to compete for AI workloads, whether from government, hyperscale, or enterprise clients, must invest in liquid cooling capabilities, high-density power distribution, and other technologies necessary to support modern AI compute requirements.

Second Implication: Geographic Dispersion and Tier-2 Development

By promoting geographic dispersion of AI compute, the initiative strengthens the economic rationale for Tier-2 data center locations, particularly for inference, training, and disaster-recovery workloads associated with regional AI laboratories.

This geographic distribution strategy creates sustained demand in locations that might otherwise struggle to attract hyperscale investment due to connectivity or enterprise proximity considerations. Tier-2 cities with lower land costs, better power access, and state-level incentives become economically viable for AI compute infrastructure when supported by government anchor demand.

Third Implication: Data Localisation Alignment

The initiative aligns with data localisation requirements under the Digital Personal Data Protection Act (DPDPA), thereby reinforcing demand for domestic storage and processing of AI-generated and AI-training data.

In contrast to purely commercial hyperscale expansion, which can pivot geographically based on cost optimisation, public AI infrastructure ensures policy continuity and long-term demand visibility. This reduces exposure to short-term market fluctuations and creates more predictable investment conditions for data center operators developing AI-ready facilities.

The alignment between AI infrastructure policy and data localisation mandates creates compounding demand effects. Organisations must not only store data domestically to comply with DPDPA requirements but also have access to domestic AI compute capacity to process that data, creating integrated demand for both storage and compute infrastructure.

Challenges and Limitations

Despite its strategic importance, the initiative encounters several implementation challenges that could affect execution timelines and ultimate outcomes.

GPU Availability and Supply Chain Constraints

GPU availability is limited by global supply chain constraints. Competition for advanced AI chips among hyperscale operators, international governments, and research institutions worldwide creates procurement challenges and potential delays in infrastructure deployment.

Rapid Technological Obsolescence

Rapid technological obsolescence may shorten asset lifecycles. The fast pace of AI hardware development means that GPU architectures procured today may face performance limitations within relatively short timeframes as newer, more efficient chips become available. This creates ongoing capital requirements for technology refresh cycles beyond the initial procurement.

Complementary Infrastructure Requirements

Effective utilisation requires complementary investments in power reliability, cooling infrastructure, skilled personnel, and software orchestration layers. GPU hardware alone is insufficient: the full stack of supporting infrastructure and human capital must be in place to achieve meaningful utilisation and deliver value to end users.

Power infrastructure must meet stringent reliability requirements for mission-critical AI workloads. Cooling systems must handle the extreme thermal loads generated by high-density GPU clusters. Operations personnel require specialised expertise in AI frameworks, cluster management, and resource allocation. Software orchestration platforms must efficiently schedule jobs and manage multi-tenant access.

Without these supporting elements, GPU assets risk underutilisation or performance bottlenecks that undermine the initiative's objectives.
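To make the orchestration requirement concrete, the following is a minimal sketch of one well-known multi-tenant allocation policy, max-min fairness. The source does not say which scheduling policy the platform uses, so this is an illustration only; the function name and tenant names are hypothetical.

```python
def max_min_fair(capacity, requests):
    """Max-min fair split of `capacity` GPUs among tenants.

    Repeatedly divides the remaining capacity equally among tenants whose
    requests are not yet met; capacity unused by small requests is
    redistributed in the next round.
    """
    allocation = {tenant: 0.0 for tenant in requests}
    remaining = float(capacity)
    unsatisfied = {t for t, r in requests.items() if r > 0}

    while unsatisfied and remaining > 1e-9:
        share = remaining / len(unsatisfied)
        for tenant in list(unsatisfied):
            grant = min(share, requests[tenant] - allocation[tenant])
            allocation[tenant] += grant
            remaining -= grant
            if requests[tenant] - allocation[tenant] <= 1e-9:
                unsatisfied.discard(tenant)
    return allocation

# Hypothetical tenants requesting more GPUs in total than the pool holds.
result = max_min_fair(10, {"research_lab": 2, "startup": 4, "health_agency": 8})
for tenant, gpus in result.items():
    print(f"{tenant}: {gpus:.1f} GPUs")
```

Under this policy small requests are met in full and the shortfall is borne by the largest requester. A production scheduler would also need to handle whole-GPU granularity, priorities, and queueing, which this sketch leaves out.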

Governance and Coordination Complexity

Furthermore, coordination among central ministries, state governments, and private data center operators adds governance complexity. Successful execution requires alignment across multiple stakeholders with different mandates, timelines, and priorities.

Central government ministries must coordinate policy, budgets, and procurement. State governments control land allocation, power tariffs, and facility approvals. Private data center operators provide facility development and operations expertise. Aligning these diverse stakeholders around common objectives and execution timelines presents significant organisational challenges.

Measurability and Evaluation Timeline

From a research perspective, measurable outcomes such as utilisation rates, cost reductions, or innovation spillovers will only become apparent over the medium term, limiting the possibility of immediate empirical validation.

Early implementation metrics (procurement completed, facilities commissioned, user registrations) provide progress indicators but do not capture ultimate value creation. True impact assessment requires longitudinal tracking of AI capability development, research outputs, startup formation, and economic value generated through AI applications. This medium-term evaluation horizon creates challenges for near-term policy refinement and iterative improvement.

Research Insight: Public-Private Infrastructure Model

India's AI public infrastructure initiative constitutes an innovative policy mechanism that connects digital sovereignty, innovation capacity, and physical infrastructure development. By socialising early-stage compute investment and relying on private operators for facility development and operations, the model integrates public provisioning with market-based execution.

For the data center sector, this approach serves as both a demand anchor and a technology accelerator, influencing infrastructure design decisions and geographic distribution. The government's role as anchor tenant de-risks private investment in AI-ready facilities, while its technical specifications establish performance benchmarks that elevate standards across the broader market.

More broadly, the initiative demonstrates how governments can shape capital-intensive digital infrastructure markets through targeted public compute investments rather than direct facility ownership. The government procures compute capacity from private operators instead of building and operating data centers itself, combining public capital allocation with private operational expertise.

This model preserves market incentives for operational efficiency and innovation while ensuring that public policy objectives (equitable access, geographic distribution, and data sovereignty) are achieved. The approach potentially offers a replicable framework for other countries seeking to balance digital sovereignty concerns with market-based infrastructure development.

As India competes globally for AI leadership and seeks to capture economic value from AI-driven innovation, the success of this public infrastructure initiative will significantly influence the country's position in the global AI value chain and its ability to develop domestic AI capabilities across research, enterprise, and public sectors.