citiesabc

Why Demystifying AI Matters for Cities, Economies and Society

20 Jan 2026

From the book "Demystifying AI" by Dinis Guarda

Alan Turing once proposed a deceptively simple test for machine intelligence:

"A computer would deserve to be called intelligent if it could deceive a human into believing that it was human."

Decades later, Stephen Hawking issued a stark warning:

"The development of full artificial intelligence could spell the end of the human race. It would take off on its own and redesign itself at an ever-increasing rate. Humans, who are limited by slow biological evolution, couldn't compete, and would be superseded."

Between these two statements lies the entire terrain we must now navigate: a landscape where artificial intelligence has moved from theoretical speculation to systemic infrastructure. Yet for all its integration into healthcare diagnostics, financial systems, urban planning algorithms and daily productivity tools, AI remains shrouded in misunderstanding. Some view it as magic. Others see it as an existential threat. Most struggle to distinguish between what AI actually does and what science fiction has trained us to expect.

This is not an academic problem. It is a governance crisis, an economic challenge and a societal imperative.

Why Demystification Cannot Wait

In 2023, Geoffrey Hinton, widely regarded as one of the "Godfathers of AI" alongside Yann LeCun and Yoshua Bengio, left his position at Google to speak publicly about the technology he helped create. His concern, expressed in an interview with MIT Technology Review, centered on a fundamental asymmetry:

"If you or I learn something and want to transfer that knowledge to someone else, we can't just send them a copy. But I can have 10,000 neural networks, each having their own experiences and any of them can share what they learn instantly. That's a huge difference. It's as if there were 10,000 of us and as soon as one person learns something, all of us know it. It's a completely different form of intelligence. A new and better form of intelligence."

This is not hyperbole. It is technical description. And it illuminates why demystifying AI has become urgent: the technology operates on principles fundamentally unlike human cognition, yet we continue to anthropomorphise it, regulate it as if it were software, and deploy it as if it were neutral.

Cities are embedding AI into traffic management, energy grids and public safety systems. Economies are restructuring around algorithmic labor, predictive analytics and automated decision-making. Legal systems are grappling with liability when machines make consequential errors. Yet policymakers, business leaders, and even many technologists lack a grounded understanding of what AI is, how it works and what it can and cannot do.

Demystifying AI means stripping away the layers of technical jargon and science-fiction mythology to reveal what lies beneath: a set of mathematical tools and software designed to recognise patterns, analyse data and assist in decision-making. It means understanding that AI is neither magic nor sentient, but it is also not trivial. It is a system with genuine power and genuine risks, shaped by the data it consumes, the objectives it optimises and the humans who deploy it.

What AI Actually Is

At its core, artificial intelligence is the broad field of creating computer systems capable of performing tasks that typically require human intelligence, such as visual perception, language translation and strategic reasoning. But this definition obscures more than it clarifies.

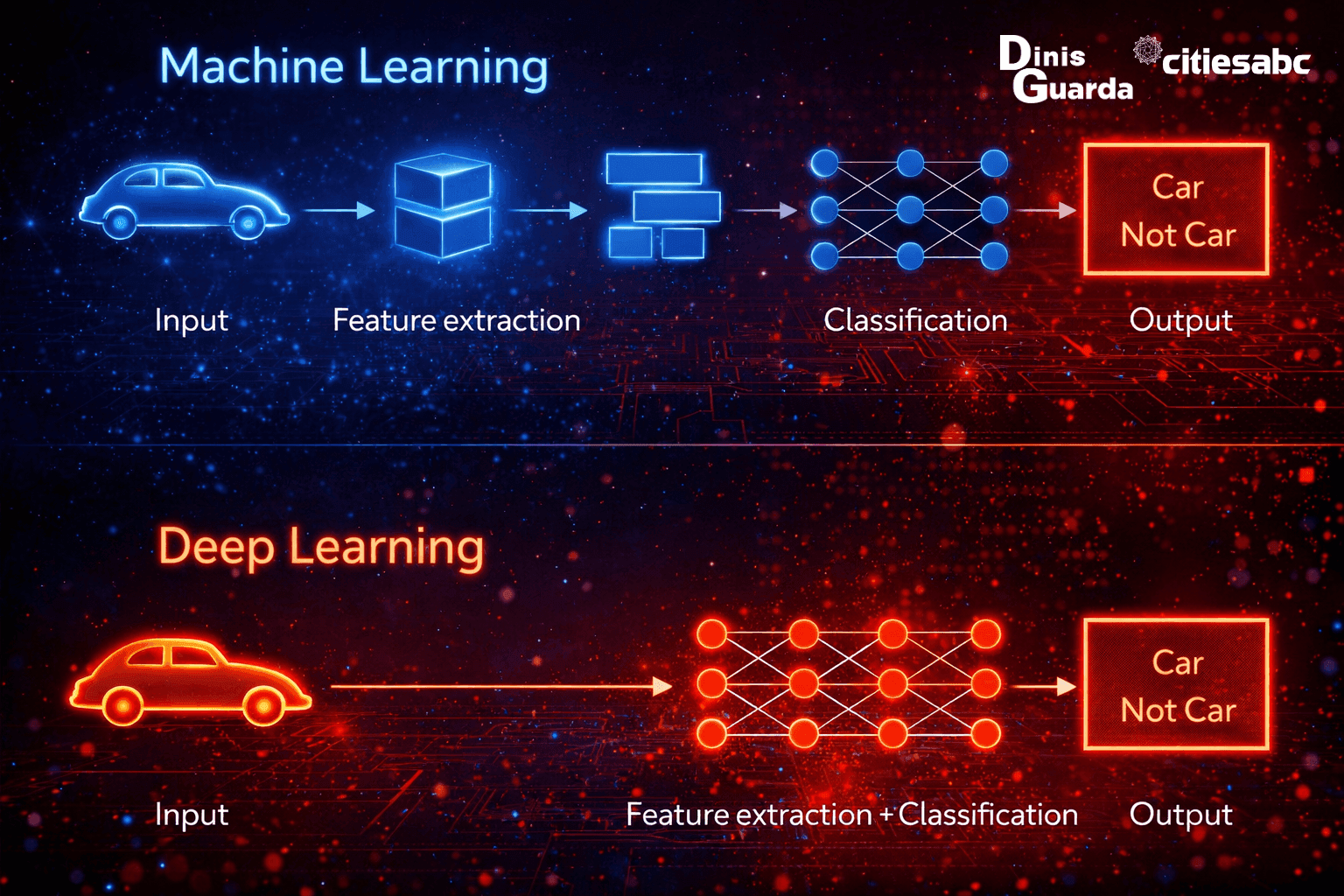

Machine Learning, a subset of AI, represents the shift from explicitly programming every rule to allowing systems to learn from data and improve performance over time. Rather than instructing a computer how to recognise a cat in a photograph through coded rules, machine learning enables the system to infer patterns from thousands of labeled images.
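
The contrast between coded rules and learned patterns can be sketched in a few lines of Python. This is a minimal illustration, not a production method: the two features (`ear_pointiness`, `snout_length`), the toy data and the nearest-centroid technique are all assumptions chosen for brevity, standing in for the thousands of labeled images a real system would use.

```python
from statistics import mean


def train_centroids(examples):
    """Learn one average feature vector (centroid) per label from labeled data."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(mean(column) for column in zip(*rows))
        for label, rows in by_label.items()
    }


def predict(centroids, features):
    """Return the label whose learned centroid is closest to the features."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))

    return min(centroids, key=lambda label: squared_distance(centroids[label]))


# Hypothetical toy data: (ear_pointiness, snout_length) -> label
labeled_examples = [
    ((0.9, 0.2), "cat"),
    ((0.8, 0.3), "cat"),
    ((0.3, 0.8), "dog"),
    ((0.2, 0.9), "dog"),
]

centroids = train_centroids(labeled_examples)
prediction = predict(centroids, (0.85, 0.25))
print(prediction)
```

No rule in this code says what a cat looks like. The decision boundary comes entirely from the labeled examples, so different data would produce different behaviour.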

Deep Learning takes this further, using neural networks, computational structures loosely inspired by the human brain, to process complex, high-dimensional information like images, speech and text. This is the architecture behind facial recognition, language models and medical imaging analysis.

Two functional categories dominate contemporary deployment:

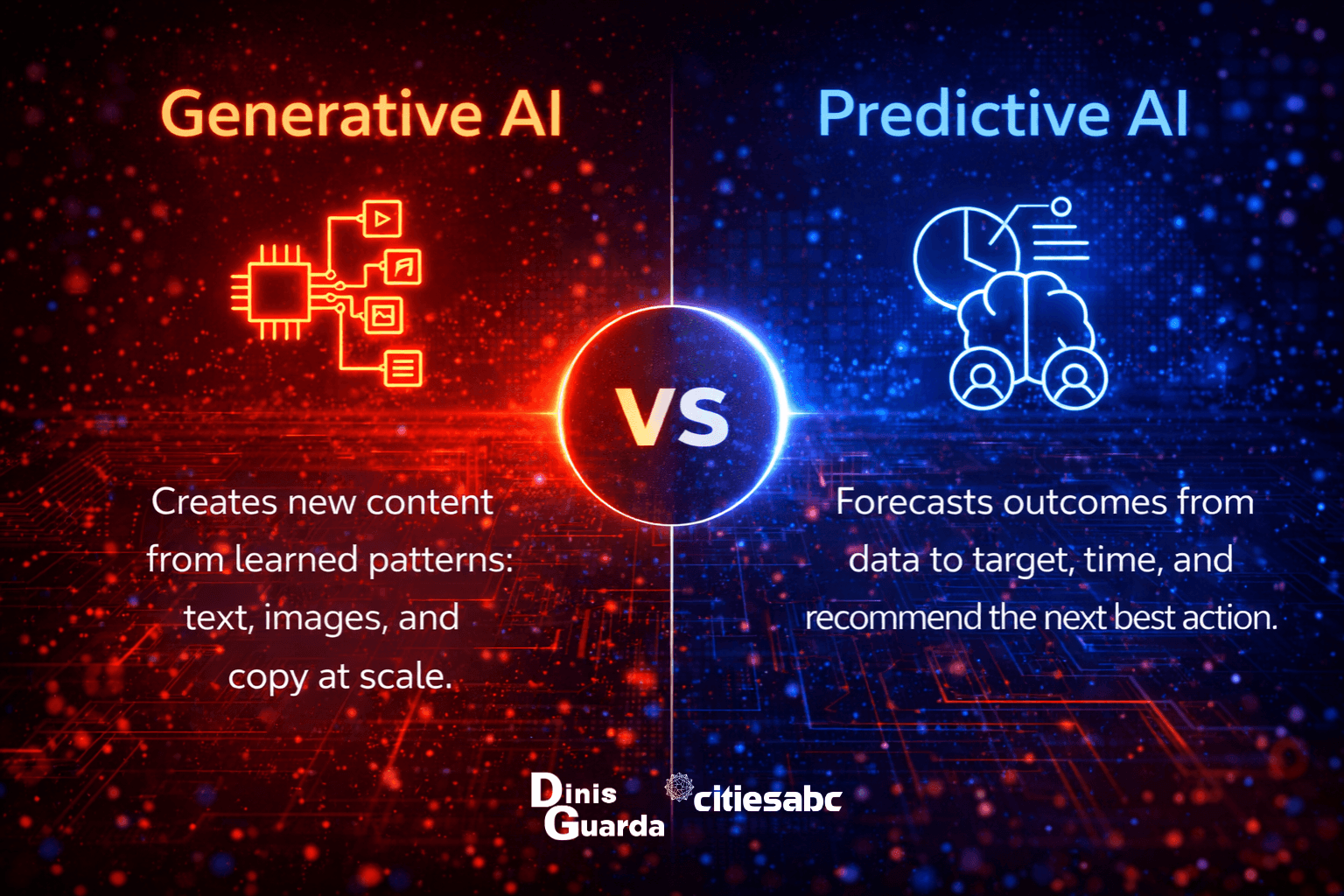

- Predictive AI analyses existing data to identify patterns and generate forecasts. Netflix recommendations, fraud detection in banking and kidney disease prediction all rely on predictive models that find correlations in historical information.

- Generative AI uses existing data to create entirely new content: text, images, music, code. Systems like ChatGPT exemplify this category, producing human-like language by predicting probable sequences of words based on vast training datasets.
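
The idea of "predicting probable sequences of words" can be illustrated with a deliberately tiny model. The sketch below is an assumption-laden toy: it counts which word follows which in a one-sentence hypothetical corpus and always picks the most frequent follower. Systems like ChatGPT use large neural networks and sample from probabilities rather than counting word pairs, but the underlying principle of predicting the next item from patterns in training data is the same.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def generate(followers, start, length):
    """Extend `start` by repeatedly appending the most frequent next word."""
    output = [start]
    for _ in range(length):
        candidates = followers.get(output[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical training text; real models learn from vastly larger datasets.
corpus = "the city plans the budget and the city plans the route"
model = train_bigrams(corpus)
sentence = generate(model, "the", 3)
print(sentence)
```

The model produces fluent-looking fragments without any notion of what a city or a budget is, which is why output that sounds plausible is not evidence of understanding.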

Beyond these operational categories lies a more contested concept: Artificial General Intelligence, or AGI. This refers to systems with human-level cognitive flexibility, capable of transferring knowledge across domains, reasoning abstractly and adapting to novel situations. Current AI systems are "narrow," excelling at specific tasks but unable to generalise. AGI remains theoretical, though a growing number of researchers and industry leaders predict that early forms of it could emerge between 2027 and 2035; such timelines remain contested.

Myths and Realities

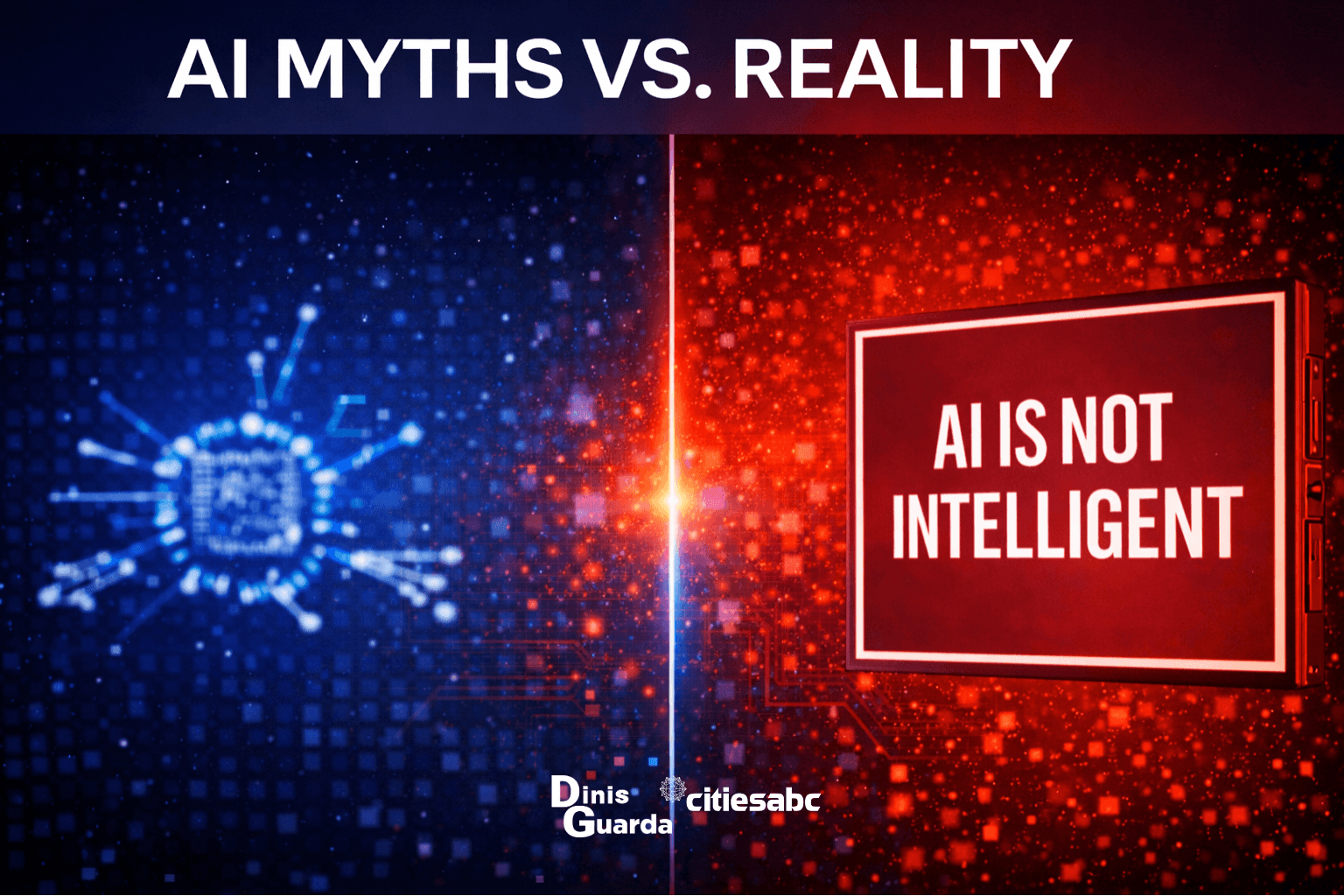

The distance between public perception and technical reality remains vast, and this gap has consequences for policy, investment and social trust.

| Myth | Reality |
| --- | --- |
| AI is magic | It is advanced mathematics, specifically, algorithms that identify correlations in historical data. When a system recommends a product or flags a transaction as fraudulent, it is executing statistical inference, not intuition. |
| AI is sentient | Current systems lack consciousness, intention, or subjective experience. They are Artificial Narrow Intelligence, designed for specific tasks and optimised for specific objectives. They do not "want" anything, nor do they "understand" in any human sense. |
| AI is infallible | Systems routinely "hallucinate," generating plausible but false information, and they inherit biases embedded in their training data. An algorithm trained on historical hiring decisions will replicate historical discrimination. A language model trained on internet text will reproduce internet prejudices. Human oversight and rigorous fact-checking are not optional; they are mandatory. |

Yet it would be equally misleading to dismiss AI as merely a tool. As systems become more adaptive and autonomous, they introduce new forms of interaction, new vulnerabilities and new forms of manipulation. AI manipulation, the capacity of systems to influence human behavior through personalised content, recommendation algorithms and conversational interfaces, is already documented. As AI agents interact with people more often and at greater speed, these dynamics will intensify, reshaping human-machine relationships in ways we are only beginning to map.

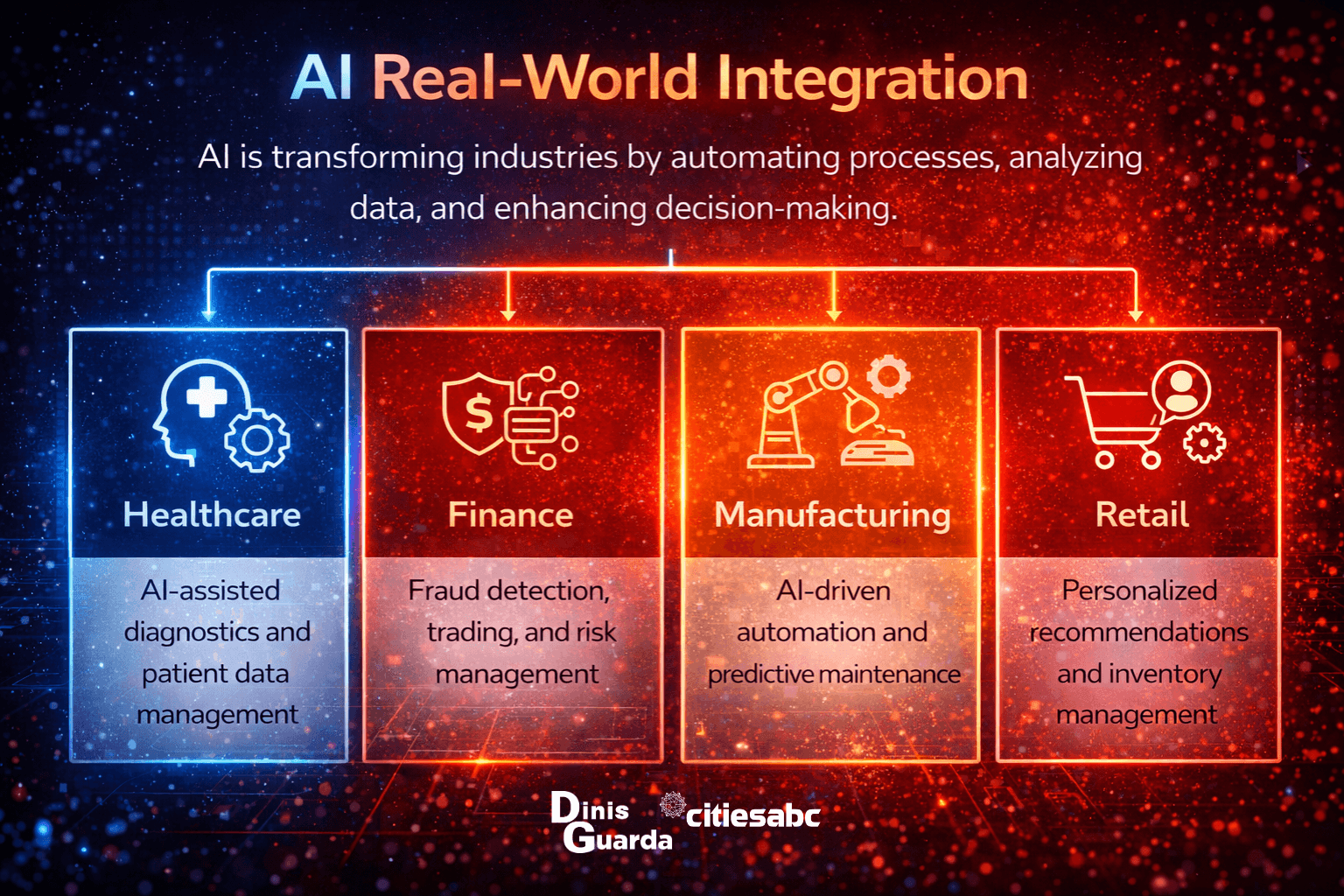

Real-World Integration

AI is no longer experimental. It is operational infrastructure across every sector.

- In healthcare, deep learning models detect diseases like cancer and predict acute kidney injury earlier than traditional methods. Protein structure prediction, powered by AI, has accelerated drug discovery and molecular biology research.

- In legal and financial systems, AI automates contract review, processes insurance claims and identifies payroll fraud, reducing costs while introducing new questions about accountability and due process.

- In education, personalised AI tutors adapt to individual learning paces, offering customised coaching at scale, though questions remain about equity, data privacy and the long-term effects of algorithmic pedagogy.

- In daily work, "AI copilots" draft emails, schedule meetings and summarise lengthy documents, promising productivity gains while raising concerns about deskilling, surveillance and cognitive dependency.

- Cities, in particular, face complex trade-offs. Algorithmic traffic management can reduce congestion but may disproportionately route traffic through lower-income neighborhoods. Predictive policing can optimise resource allocation but may reinforce discriminatory patterns. Energy grid optimisation can enhance efficiency but may introduce cybersecurity vulnerabilities.

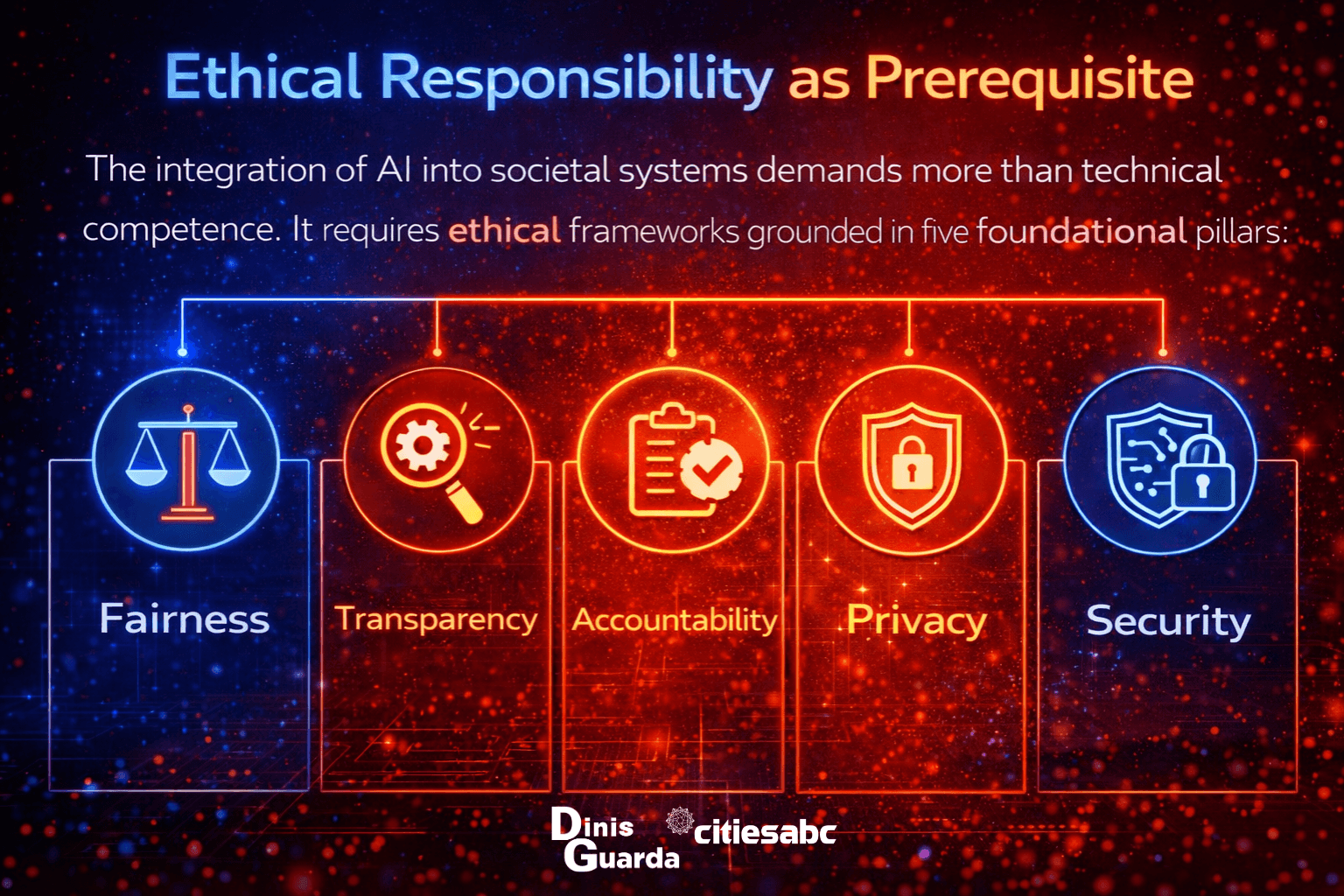

Ethical Responsibility as Prerequisite

The integration of AI into societal systems demands more than technical competence. It requires ethical frameworks grounded in five foundational pillars:

- Fairness requires actively auditing and mitigating algorithmic bias, recognising that "neutrality" is a fiction when systems are trained on historically unequal data.

- Transparency demands disclosure of when and how AI is used, enabling affected parties to understand and contest automated decisions.

- Accountability insists that humans remain responsible for AI-driven outcomes, rejecting the notion that algorithms can serve as shields against liability.

- Privacy protects sensitive user and proprietary data from misuse during training and deployment, particularly as models grow more capable of reconstructing or inferring private information.

- Security implements guardrails to prevent AI from generating harmful, malicious or destabilising content, whether through adversarial manipulation or unintended optimisation failures.

These are not aspirational principles. They are operational necessities. Organisations and governments that fail to embed them will face not only reputational damage but systemic risk.
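
To show what treating fairness as an operational necessity might look like in practice, here is a minimal sketch of one possible audit check. It compares how often an automated system gives a positive decision to each group. The group labels, the audit log and the choice of metric (the ratio of lowest to highest selection rate) are illustrative assumptions; a real audit would use several metrics and legal guidance appropriate to its jurisdiction.

```python
from collections import defaultdict


def selection_rates(decisions):
    """Compute the share of positive decisions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        positives[group] += int(approved)
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical audit log: (group, was_the_applicant_shortlisted)
audit_log = [
    ("A", True), ("A", True), ("A", True), ("A", False),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

rates = selection_rates(audit_log)
ratio = disparity_ratio(rates)
print(rates, round(ratio, 2))
```

A ratio well below 1.0, as in this toy log, does not prove discrimination on its own, but it flags a disparity that a human reviewer should investigate. What counts as an acceptable ratio is a policy choice this sketch does not settle.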

Looking Ahead

This series will explore the technical, social and governance dimensions of AI with rigor and clarity. The work of Geoffrey Hinton, Yann LeCun, Yoshua Bengio and Fei-Fei Li, the foundational figures of modern AI, moved this technology from speculation to reality. Now the task falls to policymakers, technologists, business leaders and citizens to understand it well enough to govern it wisely.

AI is neither savior nor apocalypse. It is a powerful, evolving system shaped by human choices, choices about data, deployment, oversight and values. Demystifying it is the first step toward making those choices responsibly.

In the next article, we will examine a fundamental paradox: how AI systems recognise patterns with remarkable accuracy while understanding nothing at all, and why this distinction matters for everything from medical diagnosis to criminal justice.